Hello “IT”? It’s Me (Again)

This means the analyst has to call up someone in the IT department who must in turn take the time create a special script or other “hack” to make all the data play nice together. This is a short-term fix that ends up throwing a monkey wrench into the entire data extraction process.

Essentially:

The ETL process comes to a standstill. The folks in IT often have a lot on their plate and helping an analyst with their data extraction is usually not a very high priority. This means the analyst and his or her project must wait until the tech person has the time (and the brainpower) to devote to writing the script. In many cases, people working in IT are even unaware of what an ETL tool is or does.

The manual ETL fix can introduce errors. This is because these data-extracting fixit scripts are often created on-the-fly, and next week’s rules may be different from the previous request, especially if done by a different person. Essentially, the data analyst has to hope the IT person remembers to do the same thing they did last time.

The final analysis is flawed. When different rules are applied to new data (or the same set of data on different days) the resulting analysis can’t really be trusted. This may not even be noticed in the short term, but the generated report is essentially useless as it relies on inconsistent methodology.

This can lead to companies:

- Moving in the wrong strategic direction

- Unable to efficiently target their market

- Missing valuable information

- Wasting money

- Losing clients

- Firing analysts

- Filing for bankruptcy

Perhaps some of the above bullets are extreme, but the point is that so many of today’s businesses revolve around data analysis, and the process of mining it correctly is flawed. It relies too heavily on two groups of people (IT and analysts) that have other jobs to do but out of necessity have to work together.

ETL Troubles Are Everywhere

Companies often struggle with ETL and its related issues. It has been estimated 80% of the development time of Data Warehousing projects are spent on ETL. As technology has rapidly advanced, and businesses have gone global, the need for quality metrics and clean data has accelerated.

Of course, Excel has always been the “go to” software for most analysts and the companies for which they work for several decades. Excel was one of the first “point and click” spreadsheet software programs commercially available. Since it was created and sold by Microsoft, it quickly dominated the market, although ironically its first version was only available for Macintosh computers.

As the thirst for data has increased in today’s interconnected (or should we say “struggling to be connected”) world, there are a number of companies that have arisen, either offering add-ons to Excel or proprietary ETL software that attempts to solve the aforementioned problems.

The good news is this means instead of having to call up someone in the tech department, it is now possible for analysts to crunch data successfully alone. They can do this with these new applications that interact with a variety of standard databases and are designed for ease of use.

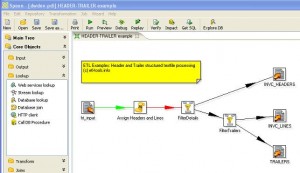

The slick, graphical user interface often gives them the ability to easily create or drag-and-drop scripts and the same rules can be automated and applied week after week, ensuring more reliable results.

Pentaho To The Rescue

Our favourite graphical ETL is Pentaho’s Data Integrator (PDI) for those reasons listed above and more. With PDI, the analyst now has complete control over the entire process from selecting and extracting source data, all the way to the final conclusions.

PDI makes sure the analyst doesn’t have to learn to code in SQL or write MapReduce Java functions – and neither will the IT guy. PDI also has highly advanced administrative features, so projects can be controlled, scheduled, and easily monitored. In fact, you can restart any ETL job and move backwards to find the failure, because every step in the transformation is clearly delineated in the GUI.

True Teamwork With Pentaho

Due to the intuitive nature of the software, it’s not difficult to implement over an entire team and the learning curve is within grasp of most analysts. It’s certainly easier than coding! And when working with others, it’s so much more efficient to transfer data than having to do it manually or, Heavens forbid, via Excel.

PDI is also scalable and can allow companies to work with larger pools of information. As one analyst joked “The simplest definition of “Big Data” is “it doesn’t fit in Excel.” PDI can do its work in real-time so you can instantly see the results.

Here are a few of its other great features:

- Blended at the source for secure, reliable data analysis and results

- Operational reporting for MongoDB, the leading NSQL database

- Customised dashboards are available via our design team

We have a free data integration kit that demonstrates how easy it is to automatically extract data from Salesforce.com on a regular basis and use it for analysis and reporting.

Download our Saleforce Integration kit.

Download the Salesforce Integration Kit

Thankfully, PDI has made it so there’s no longer a need to bother the technical department to perform functions beyond their job description or force analysts to worry about SQL. Instead, Pentaho’s Data Integrator takes care of it for them.

Zachary Zeus

Zachary Zeus is the Founder of BizCubed. He has more than 20 years' engineering experience and a solid background in providing large financial services with data capability. He maintains a passion for providing engineering solutions to real world problems, lending his considerable experience to enabling people to make better data driven decisions.